Subjective video quality evaluation involves people making judgments based on observations and experience. Some professional “golden eyes” do this as part of their job.

Now you can test your own skills in a standards-based video quality assessment campaign that is currently being conducted as a web application. Keep reading to learn more about subjective video quality evaluation, or visit https://pqtest.cascadestream.com to learn by doing. There is an incentive to participate, described in the final section.

What is the quality of this video picture?

Measuring Video Quality

Professionals who build systems around video understand that being able to measure video picture quality is a key differentiator. Streaming media service providers want to deliver content to viewers with just the right quality. If the quality is higher than necessary, excess bandwidth and cost were spent to deliver it. If the quality is poor, it can damage brand reputation and increase subscriber attrition. Either way, it is a primary concern for many businesses.

Methods for evaluating video quality can be divided into three categories.

- Golden Eyes. Expert viewers who evaluate video based on their experience and judgement.

- Standards-based subjective video quality assessments. These provide a definitive measurement of quality to which the other methods can be compared for accuracy, but they are labor intensive because they involve human evaluators.

- Objective computational models that can be automated for off-line or operational assessment. There are many algorithms available and more under development, all striving to compute quality or impairment on a scale that is meant to reflect human perception.

Note that these methods are concerned with evaluation of impairments due to the various video processing steps for distribution, which may add subtle (or worse) blur, compression artifacts, or noise. Severe quality of experience issues such as interruptions in delivery or gross picture impairments caused by dropped network packets are also important but are outside the scope of this article.

Subjective Evaluation Standards

A subjective assessment test session

The standards-based subjective assessment approach has evolved from its standard-definition, interlaced broadcast roots. Recommendation ITU-R BT.500-13 [1] is the most widely referenced subjective assessment standard. It provides guidelines for video material selection, viewing conditions, observer training, double-stimulus and single-stimulus test session sequences, and analysis of collected scores. Test sessions were traditionally conducted in controlled laboratory or studio environments, with groups or individual observers, under the supervision of a moderator.

More recent standards such as Recommendation ITU-T P.913 [2] provide updated conditions and methods better suited to modern UHD displays, streaming media distribution, and computer-supported video playback and test session management.

The objective of the test session is to present a number of video sequences to human observers and collect quality assessment scores. A five-point quality scale is commonly used:

- 5 – Excellent

- 4 – Good

- 3 – Fair

- 2 – Poor

- 1 – Bad or Unacceptable

When these scores are averaged over many observers, the result is the Mean Opinion Score (MOS) for each video sequence. The test session guidelines from the ITU recommendations provide the basis to define MOS as the measured quality.

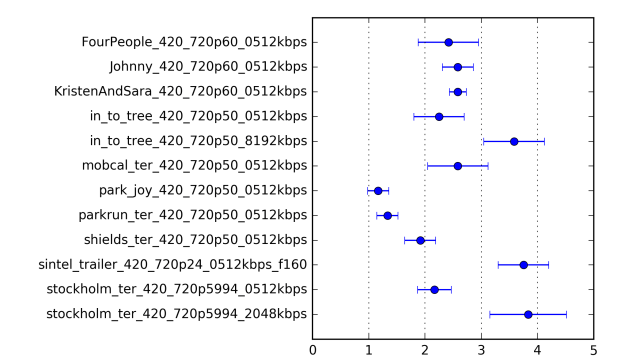

Mean Opinion Scores and 95% confidence interval, from subjective assessment
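The averaging described above can be sketched in a few lines of Python. This is only an illustration, not the analysis code used for the campaign: the function name `mos_with_ci`, the sample ratings, and the use of the normal approximation (1.96 times the standard error) for the 95% confidence interval are all assumptions made for the example.

```python
import math
import statistics

def mos_with_ci(scores, z=1.96):
    """Return (MOS, half-width of an approximate 95% confidence interval).

    `scores` holds one rating per observer for a single video sequence.
    The z = 1.96 normal approximation is an assumption of this sketch.
    """
    n = len(scores)
    mos = statistics.mean(scores)
    # Sample standard deviation (n - 1 in the denominator).
    sd = statistics.stdev(scores)
    half_width = z * sd / math.sqrt(n)
    return mos, half_width

# Made-up ratings from ten observers on the five-point scale.
ratings = [4, 5, 3, 4, 4, 5, 3, 4, 4, 4]
mos, ci = mos_with_ci(ratings)
print(f"MOS = {mos:.2f} +/- {ci:.2f}")
```

With only a handful of observers the interval is wide, which is one reason subjective campaigns try to recruit many participants.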

The subjective evaluation approach is not scalable for operational use, but it does provide the ground truth needed to validate objective quality models that are scalable. For example, the VQEG Report [3] on quality models for HD video describes a rigorous subjective validation process that was conducted in multiple labs worldwide using methods based on Recommendation BT.500. Most objective models rely on some form of subjective assessment to demonstrate their accuracy.
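As a rough illustration of what validating a model against ground truth can look like, the sketch below compares hypothetical objective model outputs with MOS values using two commonly reported statistics, Pearson correlation and root mean square error. The data are made up, the function names are chosen for this example, and the model scores are assumed to have already been mapped onto the same 1 to 5 scale as the MOS.

```python
import math

def pearson(xs, ys):
    """Pearson linear correlation coefficient between two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

def rmse(xs, ys):
    """Root mean square error between two equal-length lists."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(xs, ys)) / len(xs))

# Made-up values for five video sequences.
subjective_mos = [1.8, 2.6, 3.4, 4.1, 4.7]
model_scores = [2.0, 2.5, 3.6, 4.0, 4.5]  # assumed already on the 1-5 scale

print(f"Pearson correlation: {pearson(model_scores, subjective_mos):.3f}")
print(f"RMSE: {rmse(model_scores, subjective_mos):.3f}")
```

A correlation close to 1 and a small RMSE would suggest the model tracks the subjective scores well on this particular set of sequences.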

Modern Subjective Evaluation as a Web Application

A subjective assessment campaign is currently being conducted as a web application. The test session method is the ACR-HR (Absolute Category Rating with Hidden Reference) method described in Recommendation P.913. Subject videos have been impaired by various processing operations including scaling, compression, and filtering. Original undistorted videos are also included for scoring in the test session. During analysis, the score for each impaired stimulus can be subtracted from the score of the corresponding hidden reference to yield a differential score. Averaging these differences gives the Differential Mean Opinion Score (DMOS), another useful metric for situations where the reference video is available.
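The differential scoring just described might be computed along the following lines. The data layout, the clip identifiers, and the function name `dmos` are hypothetical and chosen only for this sketch. It follows the wording of this article (reference score minus impaired score, so larger values mean more visible impairment); some formulations, such as the one in ITU-T P.910, take the difference in the opposite direction and add an offset of 5.

```python
from statistics import mean

# Hypothetical layout: ratings[observer][clip_id] = score on the 1..5 scale.
ratings = {
    "obs1": {"ref": 5, "impaired_a": 4, "impaired_b": 2},
    "obs2": {"ref": 4, "impaired_a": 4, "impaired_b": 1},
    "obs3": {"ref": 5, "impaired_a": 3, "impaired_b": 2},
}

def dmos(ratings, reference_id, impaired_id):
    """Average, over observers, of (hidden reference score - impaired score)."""
    diffs = [obs[reference_id] - obs[impaired_id] for obs in ratings.values()]
    return mean(diffs)

for clip in ("impaired_a", "impaired_b"):
    print(f"{clip}: DMOS = {dmos(ratings, 'ref', clip):.2f}")
```

Taking the difference per observer before averaging removes part of each observer's personal bias, since a generous or critical viewer tends to shift the reference and the impaired score in the same direction.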

The web-based subjective assessment application implements the specifications of Recommendations BT.500 and P.913 as closely as possible. For example, there are instructions for controlling ambient room illumination and display calibration. An observer training sequence is presented to anchor the range of impairments represented by the five-point grading scale. As allowed in the recommendation, each video sequence is represented in the test session by a single still frame. This allows the test session to proceed more quickly, although it prevents evaluation of certain impairments such as flicker.

Now try it

Go to https://pqtest.cascadestream.com to participate. It’s free. The current campaign is open now through March 15, 2018.

The site presented the test and pre-test in an appealing and professional manner

– a recent participant

Your Incentive

As a reward for your time, you will receive a personalized report in a few weeks showing how your scores compared to the mean opinion scores. Were you more generous or more critical in your scoring than the average observer? Were your scores more or less consistent than the others? Maybe you can proclaim yourself to be the “Golden Eyes” of your organization based on this report.
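The article does not say how the report is calculated, so the following is only one plausible sketch of such a comparison, with made-up numbers and hypothetical function names. It treats the average of your score minus the MOS as a measure of generosity (positive) or criticality (negative), and the spread of those differences as a simple measure of consistency.

```python
from statistics import mean, stdev

def score_offsets(own_scores, mos_values):
    """Per-clip difference between one observer's score and the MOS."""
    return [own - mos for own, mos in zip(own_scores, mos_values)]

def observer_bias(own_scores, mos_values):
    """Positive: more generous than average. Negative: more critical."""
    return mean(score_offsets(own_scores, mos_values))

def observer_spread(own_scores, mos_values):
    """Smaller values: offsets from the MOS are more consistent across clips."""
    return stdev(score_offsets(own_scores, mos_values))

# Made-up data: one observer's scores and the MOS for the same five clips.
my_scores = [4, 3, 5, 2, 4]
clip_mos = [3.6, 3.1, 4.4, 2.2, 3.5]

print(f"bias:   {observer_bias(my_scores, clip_mos):+.2f}")
print(f"spread: {observer_spread(my_scores, clip_mos):.2f}")
```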

References

[1] ITU-R BT.500-13, “Methodology for the subjective assessment of the quality of television pictures,” International Telecommunication Union, Geneva, 1/2012, 44p.

[2] ITU-T P.913 (01/2014), “Methods for the subjective assessment of video quality, audio quality and audiovisual quality of Internet video and distribution quality television in any environment,” International Telecommunication Union, Geneva, 1/2014, 25p.

[3] Video Quality Experts Group, “Report on the Validation of Video Quality Models for High Definition Video Content,” http://www.vqeg.org, Version 2.0, June 30, 2010, 93p.